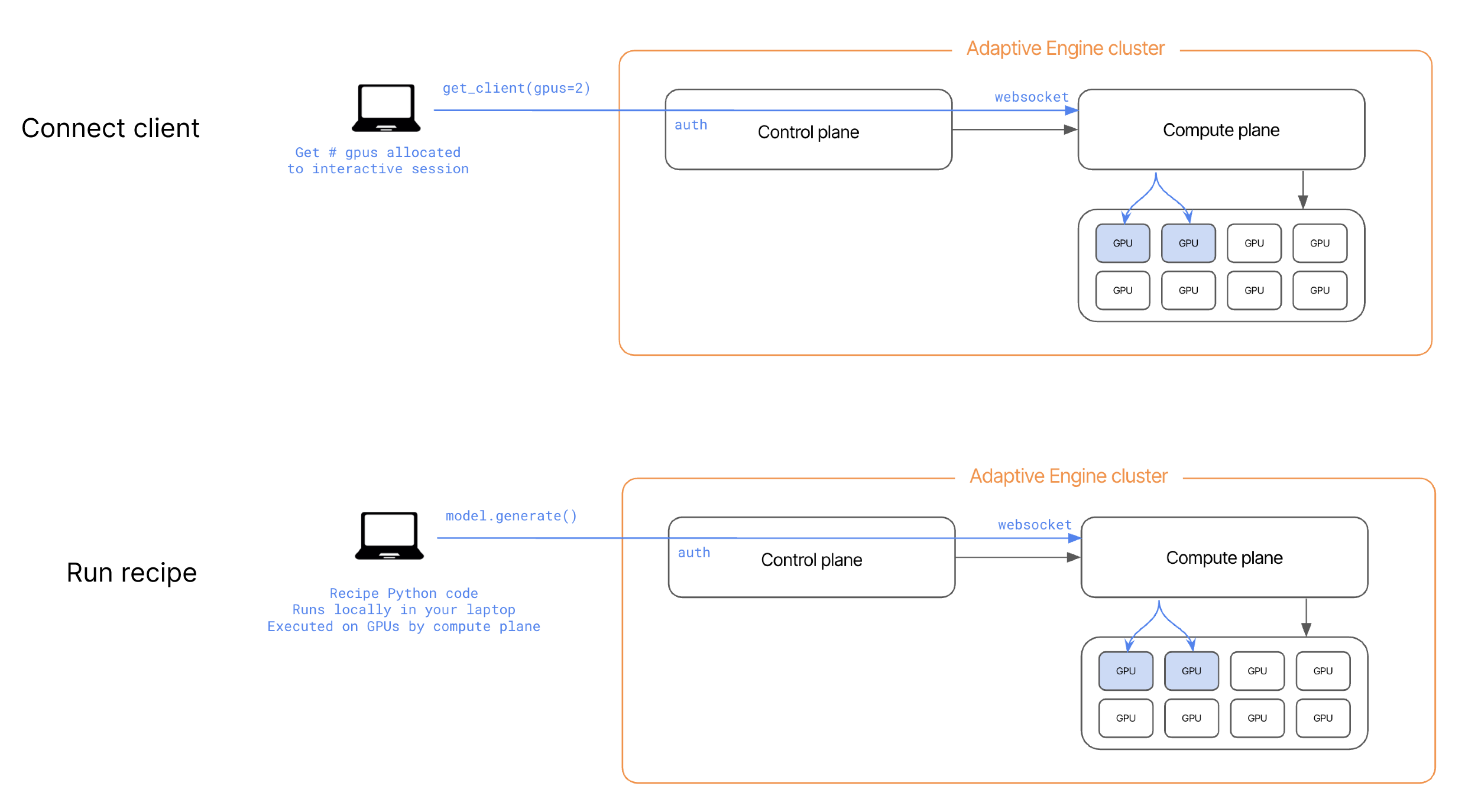

adaptive_harmony allows you to establish a direct connection via secure websockets between your local environment and the compute plane of your Adaptive Engines’ deployment. When you instantiate a RecipeContext, a desired number of GPU’s is allocated directly to you as an interactive session. These GPU’s are freed to run other workloads or interactive sessions as soon as the local python process holding that context in memory is killed.

RecipeConfigCli to avoid hardcoding secrets in python and instead load them from environment variables (all arguments can be overridden with ADAPTIVE_<INPUT_ARG>, for example ADAPTIVE_HARMONY_URL.)

If you use

adaptive_harmony in a Jupyter Notebook, you can directly await async methods like .from_config() in a Jupyter cell, no need to use asyncio.spawn method to spawn a model on GPU, you’ll get back a new handle in Python to a remote model, such as TrainingModel or InferenceModel, which you can also call methods on (such as .generate(), .train_grpo(), .optim_step(), etc.). This create a hybrid development environment, where you can step through python recipe code locally in your IDE, but have powerful compute resources execute the methods that require them.

RecipeContext is created and configured automatically at recipe launch and passed to the recipe as an input argument (ctx).